How to Find Honest Reviews of Business Software Before Everyone Else Does

- Ononkwa Egan

- 2 days ago

- 6 min read

There is a particular kind of frustration that comes from adopting a software tool six months after the early adopter pricing disappeared, the onboarding experience got complicated, and three competitors already built their workflows around it. You get the same product, but you missed the advantage.

The businesses that consistently make better technology decisions are not necessarily smarter or better resourced. They are better informed, earlier. They have figured out where to find genuine, unfiltered feedback on tools that are still early enough to matter, before the product marketing machine drowns out the honest signal with polished testimonials and case studies.

Here is where that signal actually lives.

Why Early Adopter Reviews Are Different From Everything Else

Most of the software reviews you encounter online were written after a product matured, the bugs got fixed, the pricing stabilised, and the support team scaled up. They tell you whether a tool is good in its current form, which is useful but not particularly strategic.

Early adopter reviews tell you something more valuable: whether a tool is worth the friction of being first. They reveal what breaks under real use before the company has enough customers to find and fix the problems. They tell you whether the integration promises hold up when someone actually tries to connect the tool to Salesforce or their existing payment infrastructure. And critically, they often reveal the ROI signal early, before the pricing model changes to capture more of the value being created.

The question is not whether these reviews exist. They do, in abundance. The question is where to find them.

Review Aggregators: Start Here, Filter Carefully

G2 is the most useful starting point for enterprise and professional software research, but only if you know how to filter it properly. The aggregate rating on a mature product with thousands of reviews tells you little that is actionable. What tells you more is finding tools with low review counts, ratings above 4.5, and reviews written specifically by people at companies with substantial operational complexity. That combination frequently signals a product that is genuinely performing well but has not yet been discovered by the broader market.

The filter that most people miss is the date filter. Reviews from the past six months on any platform are qualitatively different from older reviews, both because they reflect the current version of the product and because they disproportionately represent early adopters who deliberately chose to try something new. Capterra and TrustRadius both reward this kind of filtered reading, particularly for detailed technical feedback from IT professionals and operations teams who are less susceptible to marketing influence than general business users.
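For anyone who keeps a shortlist in a spreadsheet or script, the filters above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the records are invented and typed in by hand, the `Product` fields are hypothetical, and the thresholds (50 reviews as "low", roughly six months as 183 days) are arbitrary choices, not values published by G2, Capterra, or TrustRadius.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Product:
    # Hypothetical fields; no review platform's export format is implied.
    name: str
    review_count: int
    avg_rating: float
    latest_review: date


def early_signal(products, today, max_reviews=50, min_rating=4.5, window_days=183):
    """Return products with few reviews, a rating above min_rating, and recent activity."""
    cutoff = today - timedelta(days=window_days)
    return [
        p for p in products
        if p.review_count <= max_reviews
        and p.avg_rating > min_rating
        and p.latest_review >= cutoff
    ]


# Invented records for illustration only.
products = [
    Product("ToolA", 12, 4.8, date(2024, 5, 1)),
    Product("ToolB", 3400, 4.6, date(2024, 5, 20)),  # too widely reviewed
    Product("ToolC", 25, 4.2, date(2024, 4, 11)),    # rating too low
    Product("ToolD", 30, 4.7, date(2023, 1, 15)),    # no recent reviews
]
shortlist = early_signal(products, today=date(2024, 6, 1))
print([p.name for p in shortlist])
```

The third filter in the text, reviewer company size, is left out here because it depends on reading individual reviews rather than on product-level numbers.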

SoftwareReviews goes deeper into enterprise adoption trends and product satisfaction scores in ways that are particularly useful for tools being evaluated for organisation-wide deployment. Looking for products tagged as new or in beta within these platforms is one of the most efficient ways to surface what is emerging before it becomes obvious.

Beta Testing Platforms: Unfiltered and Genuinely Useful

If review aggregators give you structured feedback on products that have reached some level of maturity, beta testing platforms give you something rawer and often more revealing: first impressions from people who encountered the product when it was still in its rough stages.

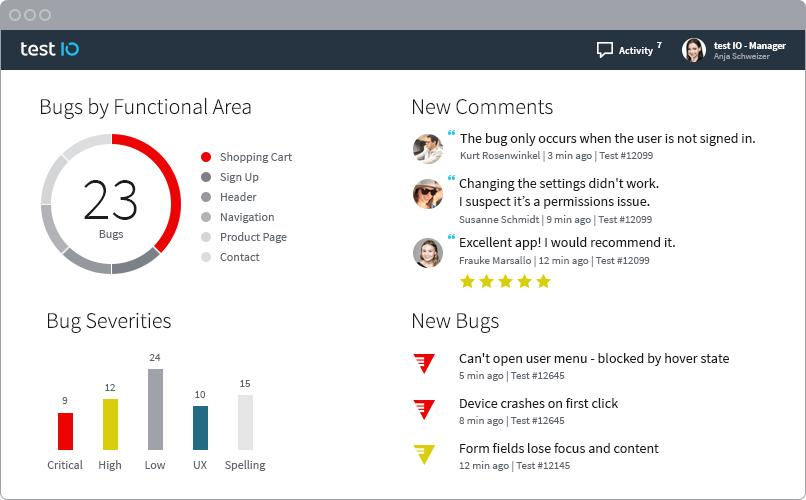

BetaTesting publishes real user sessions, bug reports, and first impressions from testers who had no relationship with the product before the engagement. The feedback tends to be more honest precisely because it lacks the post-purchase rationalisation that often softens negative reviews on mainstream platforms. People who paid for something and integrated it into their workflow are psychologically motivated to find it better than it is. Beta testers have no such motivation.

Centercode serves a more enterprise-focused audience, with structured stakeholder feedback and AI-driven insights into usability and adoption metrics. For evaluating tools that are targeting enterprise buyers, the feedback quality here is particularly strong.

BetaList is specifically designed to help discover newly launched SaaS tools still in their validation phase. The early user comments on BetaList capture a moment in a product's development that most review platforms miss entirely: the period when the founding team is still actively shaping the product in response to real user feedback, and when the gap between the product's current state and its potential is most visible.

Vendor Beta Programmes and Private Communities

This is where the most valuable and least accessible feedback lives, which is precisely what makes it worth pursuing.

Many software companies run Early Access Programmes for selected users who test products before public launch. The feedback generated in these programmes is unfiltered in a way that nothing on a public review platform can match, because participants know their feedback will directly influence the product and have no commercial incentive to be anything other than honest. The challenge is finding these programmes.

The most reliable routes are to check product websites directly for beta or early access options, join the Slack or Discord communities that most SaaS companies now maintain for their user bases, and monitor private forums where operators and founders share recommendations. When a product like GitLab or Smartsheet opens early access to a new feature, the community discussion that follows is often more information-dense than anything that will ever appear on G2.

The broader point is that the communities where operators genuinely share what is working in their businesses, rather than what they want to be seen using, are where the most actionable software intelligence is generated. Reddit communities like r/SaaS and r/enterprise, LinkedIn discussions among operators who have actually deployed the tools they write about, and niche founder communities all produce this kind of signal. The search terms that surface it most reliably are specific: "early access review" combined with a tool name, or "beta feedback" combined with a software category.
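If you track more than a handful of tools, the two search patterns above are easy to generate in bulk. The snippet below is a minimal sketch: the function name `discovery_queries` and the sample tool and category names are made up for illustration, and the output is plain text to paste into whichever search box you use.

```python
def discovery_queries(tool_names, categories):
    """Build quoted-phrase search strings from tool names and software categories."""
    queries = [f'"early access review" {name}' for name in tool_names]
    queries += [f'"beta feedback" {category}' for category in categories]
    return queries


# Placeholder inputs; substitute the tools and categories you care about.
queries = discovery_queries(["ExampleTool"], ["invoicing software"])
for q in queries:
    print(q)
```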

Newsletters and Curated Discovery Platforms

The signal-to-noise problem in software discovery is real and getting worse as the volume of new tools increases. Curated newsletters that specifically track emerging and beta-stage tools serve a genuinely valuable filtering function: someone with the expertise and network to identify promising tools early is doing the discovery work on your behalf.

The best of these newsletters combine beta invites with early commentary that gives you a sense of whether a tool is worth your attention before you spend time evaluating it yourself. They function as a first-pass filter that lets you focus your evaluation energy on the tools most likely to be relevant, rather than processing every new launch indiscriminately.

How to Know Whether an Early Tool Is Worth Adopting

Not everything new is worth being early for. The filtering question is whether the tool's early performance signals genuine problem-solving or just interesting technology looking for a use case.

The markers that matter are consistent across contexts. Verified reviews from people in real operational environments at mid-size to large companies carry more weight than reviews from individuals or very small teams, because operational complexity reveals product gaps that simple use cases do not. Integration depth is a telling signal: a tool that connects well with the CRM systems, payment platforms, and internal workflows that businesses already depend on is more likely to achieve genuine adoption than one that requires building around it.

Consistent early feedback patterns are more meaningful than individual reviews. When multiple independent reviewers with no connection to each other describe the same specific benefits, such as saving time on a particular task, eliminating a specific type of error, or making a particular workflow faster, that convergence is a reliable signal. When the same specific complaints appear repeatedly across independent reviews, that convergence is equally meaningful in the other direction.
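One way to make that convergence check concrete is to tag each review with the themes it mentions and count how many distinct reviewers raise each one. The sketch below assumes you have done the tagging by hand; the reviewer labels, themes, and the threshold of three reviewers are all hypothetical.

```python
from collections import defaultdict


def convergent_themes(reviews, min_reviewers=3):
    """Count distinct reviewers per theme, keeping themes raised by at least min_reviewers."""
    reviewers_by_theme = defaultdict(set)
    for reviewer, themes in reviews:
        for theme in themes:
            # A set ensures one reviewer repeating a point is counted once.
            reviewers_by_theme[theme].add(reviewer)
    return {
        theme: len(who)
        for theme, who in reviewers_by_theme.items()
        if len(who) >= min_reviewers
    }


# Invented, hand-tagged reviews: (reviewer, themes mentioned).
reviews = [
    ("ops_lead_1", ["faster invoicing", "sync errors"]),
    ("it_admin_2", ["faster invoicing"]),
    ("founder_3", ["faster invoicing", "sync errors"]),
    ("analyst_4", ["nice interface"]),
    ("ops_lead_1", ["sync errors"]),  # same reviewer again
]
signals = convergent_themes(reviews)
print(signals)
```

The same function works for complaints as well as benefits, since it only counts how many independent people raised a theme, not whether the theme is positive.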

The ROI signal is the most commercially important marker. Early adopters who describe concrete productivity gains, cost savings, or faster workflows are providing evidence of genuine value creation rather than just enthusiasm for novelty. A tool that multiple early users describe as having changed how their team works is worth serious evaluation. A tool that multiple early users call interesting, without yet being sure how to use it, probably is not.

The Shift That Makes Honest Reviews of Business Software Useful

The most common use of software reviews is confirmatory: someone has already decided to consider a tool and uses reviews to build confidence in that decision. This produces adequate decisions but not strategic ones.

The more valuable use of honest reviews of business software is prospective: using early review signals to identify which tools are about to become important before they become obvious. The businesses that noticed the early signals around tools like Notion, Slack, and Monday.com before they became ubiquitous had time to adopt, integrate, and build workflows around them while competitors were still discovering they existed.

That timing advantage compounds. A team that has been using a genuinely better tool for twelve months before competitors adopt it has not just the tool itself but a year's worth of institutional knowledge about how to use it well.

The infrastructure for finding these signals early is available to anyone willing to look systematically. The places described here are not obscure or difficult to access. What separates the businesses that consistently make better technology decisions is simply the habit of looking in the right places, earlier, with the right filters.

The tools worth adopting early are signalling their potential right now. The question is whether you are paying attention.
